Overview

Datameer applies industry-standard data protection practices to keep your data safe in the event of a disaster. Administrators can apply any combination of our high availability, replication, and backup services to protect Datameer instances and to meet the recovery point objective (RPO) and recovery time objective (RTO) targets set for internal customers.

Data and insight are the lifeblood of business operations today, so it’s essential that disaster recovery services are in place to protect your data from events ranging from disk and node failures to accidental data deletion and site failures.

To meet this need, Datameer offers high availability, replication, and backup services to protect your Datameer instance. You have the flexibility to decide which level of protection is best for your environment.

Proven Data Protection for Critical Components

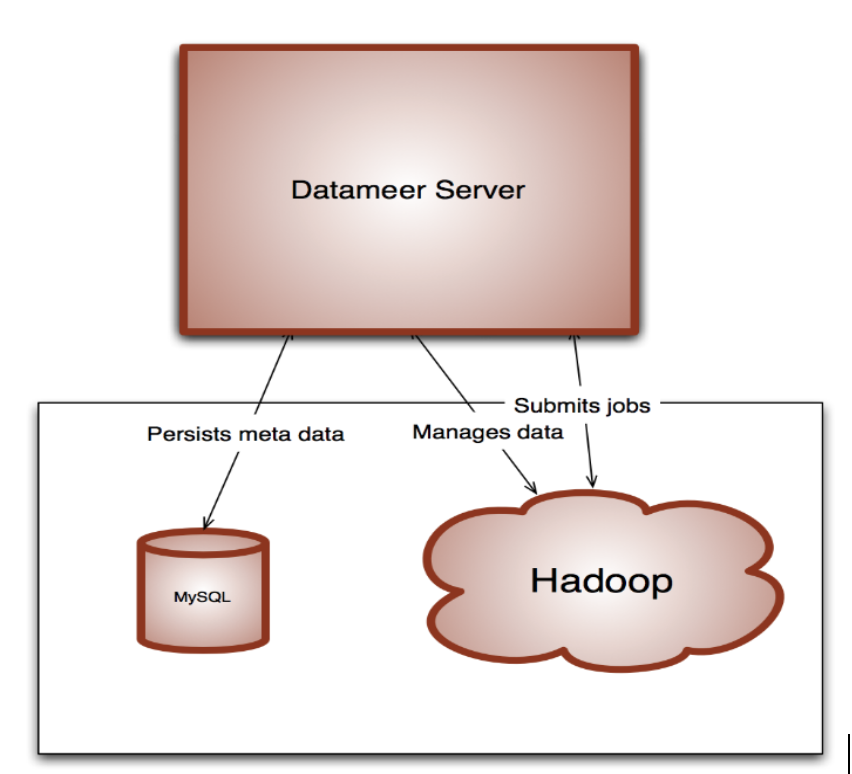

As shown in Figure 1, there are three main components of your Datameer installation that must be protected from potential disasters:

- Configuration files in the Datameer installation directory

- The Oracle® MySQL database instance containing related metadata

- Data files on the Hadoop Distributed File System (HDFS)

Figure 1: Topology of a Datameer installation

Configuration Files

To protect against disk and node failures, store the configuration files in your Datameer installation directory (including any custom plug-in binaries) on a RAID volume or SAN-attached storage. Backups should also be performed regularly so that accidentally deleted data can be restored as needed.
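As an illustration of the regular backup described above, the following minimal sketch archives an installation directory into a timestamped tarball. The function name and the example paths are hypothetical, and the sketch assumes the backup target sits on storage separate from the installation directory.

```python
import tarfile
import time
from pathlib import Path


def backup_install_dir(install_dir: str, backup_root: str) -> Path:
    """Archive install_dir into a timestamped .tar.gz under backup_root.

    Both paths are supplied by the caller; nothing here is specific to
    Datameer beyond the idea of archiving the installation directory.
    """
    source = Path(install_dir)
    if not source.is_dir():
        raise FileNotFoundError(f"not a directory: {source}")

    target_dir = Path(backup_root)
    target_dir.mkdir(parents=True, exist_ok=True)

    # A timestamp in the file name keeps successive backups side by side.
    stamp = time.strftime("%Y%m%dT%H%M%S")
    archive = target_dir / f"{source.name}-{stamp}.tar.gz"

    with tarfile.open(archive, "w:gz") as tar:
        # arcname stores paths relative to the directory name rather than
        # the absolute path on the original host.
        tar.add(source, arcname=source.name)
    return archive
```

A scheduler such as cron could call `backup_install_dir("/opt/datameer", "/mnt/backups/datameer")` (both paths are placeholders) at whatever interval your recovery point objective requires.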

Metadata

The metadata for a Datameer instance resides in a MySQL database instance. MySQL natively offers high availability and replication configuration options.

Additionally, MySQL provides native utilities, such as mysqldump, to back up and recover a database instance as needed.
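A metadata backup might be scripted along the following lines. This is a sketch only: the database name, user, and host are hypothetical placeholders, and it assumes the password is supplied through a MySQL option file rather than on the command line. The `mysqldump` options used here are standard ones.

```python
import subprocess
from typing import List


def build_dump_command(database: str, user: str, host: str, out_file: str) -> List[str]:
    """Build a mysqldump invocation for a single database."""
    return [
        "mysqldump",
        f"--host={host}",
        f"--user={user}",
        # Dump from a single consistent snapshot (effective for InnoDB tables).
        "--single-transaction",
        # Include stored procedures and functions in the dump.
        "--routines",
        f"--result-file={out_file}",
        database,
    ]


def run_dump(command: List[str]) -> None:
    """Run the dump and raise CalledProcessError if mysqldump fails."""
    subprocess.run(command, check=True)
```

For example, `run_dump(build_dump_command("datameer_metadata", "backup_user", "db.example.internal", "/mnt/backups/metadata.sql"))` would write a SQL dump that can later be restored by feeding the file to the `mysql` client.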

Data Files on HDFS

Datameer uses Hadoop to store imported data and the results of analyses. Hadoop is a distributed system designed from the ground up to tolerate disk and node failures, which helps protect your data. Apache™ Falcon can extend Hadoop with additional data management capabilities, including replication. In addition, you can configure Hadoop’s file system trash interval (the fs.trash.interval property) to protect your data from accidental deletion.
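The trash interval is set through the `fs.trash.interval` property in Hadoop's `core-site.xml`, expressed in minutes. The helper below is a hypothetical convenience that renders the property element for a retention period given in hours; the 24-hour figure in the usage note is only an example, not a recommended value.

```python
from xml.etree import ElementTree as ET


def trash_interval_property(retention_hours: float) -> str:
    """Render a core-site.xml <property> element for fs.trash.interval.

    Hadoop expects the value in minutes; a value of 0 disables the trash
    feature, so non-positive retention is rejected here.
    """
    minutes = int(retention_hours * 60)
    if minutes <= 0:
        raise ValueError("retention must be longer than zero minutes")

    prop = ET.Element("property")
    ET.SubElement(prop, "name").text = "fs.trash.interval"
    ET.SubElement(prop, "value").text = str(minutes)
    return ET.tostring(prop, encoding="unicode")
```

Calling `trash_interval_property(24)` yields a property with the value `1440`, which can be pasted inside the `<configuration>` element of `core-site.xml`. With trash enabled, files removed through the HDFS shell are typically moved to a trash directory and kept for the configured interval before being purged.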

Recovery from Inconsistent States

Sometimes only one of the three components of an installation fails while the others remain healthy. For example, suppose the MySQL database instance fails, but the HDFS data and configuration files are intact. Such a partial failure can leave your system in an inconsistent state.

In this scenario, after the MySQL instance is recovered, a job’s results stored on HDFS might no longer be visible in Datameer, for example, if the metadata for the job was not included in the most recent backup. When recovering from a failure, the best practice is to ensure that all three components (as described previously) are recoverable to a consistent point in time, not just the component with the impacted job. This best practice minimizes the need to manually synchronize the components after recovery.
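One way to reason about a consistent recovery point is to pick, for each component, the newest backup that is not later than the newest point all components can reach. The sketch below illustrates that selection; the component names and the idea of tracking backup timestamps in a dictionary are assumptions for illustration, not part of Datameer.

```python
from datetime import datetime
from typing import Dict, List


def consistent_recovery_point(backups: Dict[str, List[datetime]]) -> Dict[str, datetime]:
    """Choose one backup per component so that all share a common cutoff.

    backups maps a component name to the timestamps of its available backups.
    """
    if not backups or any(not times for times in backups.values()):
        raise ValueError("every component needs at least one backup")

    # The component whose most recent backup is oldest limits how far
    # forward the whole system can be restored consistently.
    cutoff = min(max(times) for times in backups.values())

    selection: Dict[str, datetime] = {}
    for component, times in backups.items():
        candidates = [t for t in times if t <= cutoff]
        if not candidates:
            raise ValueError(f"no backup of {component!r} at or before {cutoff}")
        # Newest backup that does not run ahead of the cutoff.
        selection[component] = max(candidates)
    return selection
```

Backups chosen this way can still differ by up to one backup interval, so any job that ran between the selected backups may need to be rerun after recovery.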

With Datameer, you can bring all of the components of your system into a consistent state by reprocessing the input data (i.e., your Datameer workbook). In this case, the impacted job needs to be rerun to complete the disaster recovery.
